LLM

Generate and transform text with powerful language models

What does this node do?

The LLM (Large Language Model) node lets you generate, transform, and analyze text using AI models like GPT, Claude, or Gemini. It’s the foundation for AI-powered workflows.

Common uses:

- Generate content (articles, summaries, emails)

- Analyze and extract information

- Transform text formats

- Answer questions about data

Quick setup

Add the LLM node

Find it in the node library under AI Nodes → LLM.

Write your instructions

Tell the AI what to do. Be specific and clear.

Choose a model

Select from GPT, Claude, Gemini, etc.

Connect and run

Connect inputs and run your workflow.

Configuration

Required fields

instructions (string, required): The prompt that tells the AI what to do. This is the most important field.

Tips for good instructions:

- Be specific about what you want

- Provide context and examples

- Specify the output format

- Use variables to include dynamic data
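To illustrate the last tip, here is a minimal sketch of how `{{variable}}` placeholders could be filled in before a prompt reaches the model. The `render_instructions` helper is hypothetical and written only for illustration; the node performs its own substitution, and its exact rules (whitespace, missing values) may differ.

```python
import re

# Hypothetical helper, not part of the LLM node's API.
# Matches placeholders such as {{content}} or {{ LLM_0.response }}.
PLACEHOLDER = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")


def render_instructions(template: str, variables: dict) -> str:
    """Replace each {{name}} placeholder with its value from `variables`."""

    def substitute(match):
        name = match.group(1)
        if name not in variables:
            # Fail loudly rather than send a prompt with a hole in it.
            raise KeyError(f"No value provided for variable '{name}'")
        return str(variables[name])

    return PLACEHOLDER.sub(substitute, template)


template = (
    "Summarize the following article in 3 bullet points.\n\n"
    "Article:\n{{content}}"
)
prompt = render_instructions(
    template, {"content": "AI tools are changing content workflows."}
)
print(prompt)
```

Raising on a missing variable is a design choice for this sketch: an unfilled placeholder usually produces a confusing response, so it is easier to catch before the model runs.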

Example:

Summarize the following article in 3 bullet points.

Focus on the main takeaways for marketers.

Article:

{{content}}

Optional fields

system_message (string): Sets the AI’s persona and behavior context.

Example:

You are a senior SEO consultant. Provide specific,

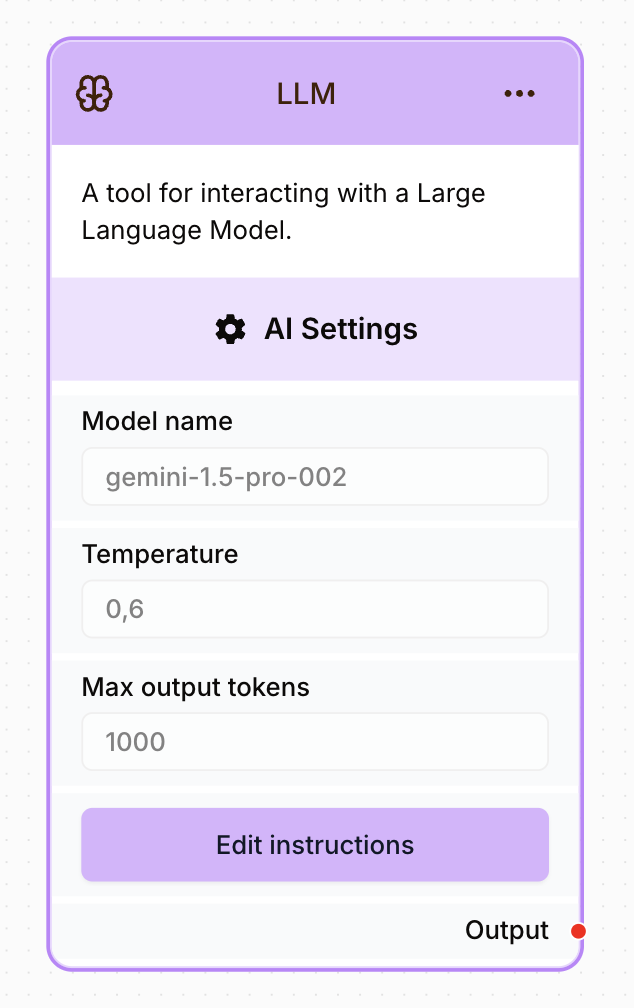

actionable recommendations based on data.AI settings

model (string, default: gpt-4o): The AI model to use.

| Model | Best for | Speed | Cost |

|---|---|---|---|

| GPT | Complex tasks, reasoning | Medium | High |

| Claude | Long content, nuance | Medium | Medium |

| Gemini | Google integration | Fast | Medium |

temperature (number, default: 0.6): Controls randomness in the output.

| Value | Behavior |

|---|---|

| 0.0-0.3 | Focused, consistent |

| 0.4-0.6 | Balanced (default) |

| 0.7-1.0 | Creative, varied |
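For intuition about why lower values give more consistent output, the sketch below shows the general way temperature is applied in language-model sampling: token scores are divided by the temperature before being turned into probabilities. This is an illustration only. The scores are made up, and how each provider behind this node handles temperature internally (especially a value of 0) is an assumption here.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw token scores to probabilities, scaled by temperature."""
    if temperature <= 0:
        # Assumption: treat 0 as greedy, all probability on the top token.
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
for t in (0.2, 0.6, 1.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

At low temperature the top-scoring token takes almost all of the probability, which is why output becomes more repeatable; at higher values the alternatives are picked more often.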

max_output_tokens (number, default: 1000): Maximum length of the response in tokens.

- 500 tokens ≈ 375 words

- 1000 tokens ≈ 750 words

- 4000 tokens ≈ 3000 words
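The estimates above imply roughly 0.75 words per token. As a rough sketch, you could use that ratio to pick a `max_output_tokens` value from a target word count. The `estimate_max_output_tokens` helper and its `headroom` parameter are hypothetical, and real token counts vary by model and language.

```python
import math

# Rule of thumb taken from the estimates above (375 words / 500 tokens).
WORDS_PER_TOKEN = 0.75


def estimate_max_output_tokens(target_words, headroom=0.2):
    """Estimate a max_output_tokens value for a response of about `target_words` words.

    `headroom` adds a safety margin so the response is less likely to be cut off.
    """
    tokens = target_words / WORDS_PER_TOKEN
    return math.ceil(tokens * (1 + headroom))


# With no headroom, 750 words maps back to the 1000-token default.
print(estimate_max_output_tokens(750, headroom=0.0))
# With the default margin, the suggested limit is somewhat higher.
print(estimate_max_output_tokens(750))
```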

output_json_schema (object): Define a JSON schema to get structured output.

Example:

{

"summary": "string",

"score": "number",

"recommendations": ["string"]

}

Output

The node returns the AI’s response:

{

"response": "The generated text or JSON output...",

"model": "gpt-4o",

"tokens_used": 523,

"finish_reason": "stop"

}

Access the response: {{LLM_0.response}}
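If you pass the node's output to your own code (for example, in a script or code step), a small check on `finish_reason` can catch truncated responses early. This is a sketch under assumptions: the `extract_response` helper is hypothetical, and it assumes that any `finish_reason` other than `"stop"` means the response did not end naturally. Only the `"stop"` value is confirmed by the output shown above.

```python
# Sample output copied from the structure shown above.
node_output = {
    "response": "The generated text or JSON output...",
    "model": "gpt-4o",
    "tokens_used": 523,
    "finish_reason": "stop",
}


def extract_response(output):
    """Return the response text, raising if the output looks incomplete."""
    reason = output.get("finish_reason")
    # Assumption: anything other than "stop" signals an incomplete response,
    # such as one that hit max_output_tokens.
    if reason != "stop":
        raise ValueError(f"Response may be incomplete: finish_reason={reason!r}")
    return output["response"]


print(extract_response(node_output))
```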

Examples

Content summarization

Instructions:

Summarize this article in 3 bullet points:

{{content}}

Keep each point under 20 words.

Output:

• AI tools are transforming content workflows

• Automation saves 10+ hours per week

• Integration with existing tools is seamless

Data extraction

Instructions:

Extract the following information from this company page:

{{content}}

Return as JSON:

{

"company_name": "",

"industry": "",

"employee_count": "",

"products": []

}

Output:

{

"company_name": "Acme Technologies",

"industry": "SaaS",

"employee_count": "50-100",

"products": ["Project Management", "Time Tracking"]

}

Content generation

Instructions:

Write a professional email to {{contact_name}} at {{company}}.

Context: {{meeting_notes}}

The email should:

- Thank them for the meeting

- Summarize key discussion points

- Propose next steps

- Be under 150 words

Best practices

Write effective prompts

Specific (recommended):

Summarize this article in exactly 3 bullet points.

Each bullet should be under 20 words.

Focus on actionable takeaways for marketers.

Article:

{{content}}

Vague (avoid):

Summarize this:

{{content}}

Use system messages

Set context for better results:

System: You are a data analyst. Always provide specific numbers

and cite your sources. Use a professional tone.

Request structured output

For processing in subsequent nodes, request JSON:

Analyze this data and return as JSON:

{

"sentiment": "positive|negative|neutral",

"confidence": 0-100,

"key_phrases": ["...", "..."]

}

Handle long content

For content that might exceed token limits:

- Chunk content before sending

- Process chunks separately

- Merge results
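The three steps above can be sketched as follows. This is a minimal illustration, not the node's built-in behavior: `chunk_text` and `summarize_long_text` are hypothetical helpers, chunks are measured in words rather than tokens, and `summarize` stands in for whatever call runs the LLM node on one chunk.

```python
def chunk_text(text, max_words=500, overlap=50):
    """Split text into word-based chunks with a small overlap between neighbours."""
    if max_words <= overlap:
        raise ValueError("max_words must be larger than overlap")
    words = text.split()
    chunks = []
    step = max_words - overlap
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def summarize_long_text(text, summarize):
    """Chunk the text, process each chunk separately, then merge the results."""
    partials = [summarize(chunk) for chunk in chunk_text(text)]
    return "\n".join(partials)


# Usage with a stand-in for the model call (it just reports the chunk size).
sample = " ".join(f"word{i}" for i in range(1200))
print(summarize_long_text(sample, lambda chunk: f"{len(chunk.split())} words"))
```

The overlap keeps a sentence that straddles a chunk boundary visible in both chunks. For a single coherent summary, the merged text can be sent through the node once more.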

Common issues

Response is cut off

Increase max_output_tokens. The default of 1000 tokens (about 750 words) may be too low for long outputs.

JSON output is invalid

- Use a JSON schema in settings

- Add “Return only valid JSON” to instructions

- Lower temperature for more consistent formatting
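If a downstream step consumes the JSON, it can also help to validate the response before using it. The sketch below parses a response and checks it against the simplified schema example from the output_json_schema field. The `parse_json_response` helper and the `EXPECTED_FIELDS` mapping are hypothetical, and the fence-stripping step assumes the model may wrap JSON in a Markdown code fence, which some models do.

```python
import json

FENCE = "`" * 3  # Markdown code fence marker

# Hypothetical expected shape, mirroring the simplified schema example.
EXPECTED_FIELDS = {"summary": str, "score": (int, float), "recommendations": list}


def strip_code_fence(text):
    """Remove a surrounding Markdown code fence, if the model added one."""
    text = text.strip()
    if text.startswith(FENCE) and text.endswith(FENCE):
        text = text[len(FENCE):-len(FENCE)]
        # Drop an optional language tag such as "json" on the opening line.
        first_line, _, rest = text.partition("\n")
        if first_line.strip() == "" or first_line.strip().isalpha():
            text = rest
    return text.strip()


def parse_json_response(response):
    """Parse a model response as JSON and check the expected fields."""
    # json.loads raises json.JSONDecodeError (a ValueError) on invalid JSON.
    data = json.loads(strip_code_fence(response))
    if not isinstance(data, dict):
        raise ValueError("Expected a JSON object")
    for field, expected_type in EXPECTED_FIELDS.items():
        if field not in data:
            raise ValueError(f"Missing field: {field}")
        if not isinstance(data[field], expected_type):
            raise ValueError(f"Field '{field}' has the wrong type")
    return data


raw = FENCE + 'json\n{"summary": "ok", "score": 8, "recommendations": ["a"]}\n' + FENCE
print(parse_json_response(raw))
```

When validation fails, one option is to rerun the node with a lower temperature or a stricter instruction, as suggested in the list above.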

Results are too random

Lower the temperature (0.2-0.4) for more consistent outputs.

Response doesn't follow instructions

- Be more specific in your instructions

- Add examples of expected output

- Use a system message to set context